iLife '09 and Face Detection in iPhoto

I have been asked numerous times in the last few weeks how I feel about the new iLife '09 suite, and I have been noncommittal at best, for lack of time to actually work with the apps. No more, I say. Over the last few days I have delved deep into the apps, and I am quite impressed. Though the changes are not monumental in terms of new features, they are a real step forward, iPhoto and iWeb in particular.

The buzz on iPhoto has been around its new Face Detection and Locations features (Apple brands them Faces and Places). iPhoto scans one's photo library and identifies faces using a learning algorithm, refined by the corrections the user makes along the way. To kick this feature off, one names a face in any picture. iPhoto then analyzes the distinguishing characteristics of that face and gathers the images in the library that it computes show the same person.

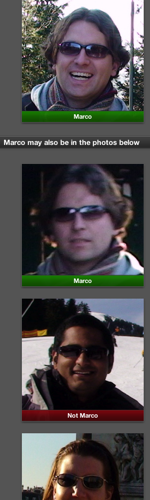

Sounds really impressive on paper, so I decided to take it for a whirl. The results were a mixed bag. When the face is identified from a good picture, i.e. a full, face-on shot of, say, person X, iPhoto correctly locates other pictures of X. However, if X is wearing sunglasses or other accessories, iPhoto errs repeatedly, as the screenshot shows. I identified a former colleague of mine, Marco, in a picture, and iPhoto went off on a tangent, erroneously concluding that everyone wearing sunglasses was Marco.

On the flip side, I identified my little niece in a clear shot, and iPhoto correctly found pictures of her going back to when she was a few months old. She's 2.5 now. Very impressive.

Locations works just as well. For iPhoto to determine a picture's location automatically, one needs a GPS-capable camera (an iPhone, for instance) or a GPS device that can embed geotags in the photo's EXIF data. Alternatively, one can specify the location manually, a tedious task when one has 10,000+ photos (from 2008 alone, as I do).
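For the curious: EXIF stores a GPS position as degrees, minutes and seconds (each a rational number) plus a reference letter (N/S for latitude, E/W for longitude). Before a photo can be dropped on a map, those values have to become signed decimal degrees. Here is a minimal sketch of that arithmetic, assuming the tag values have already been read out of the file. The function name, the (numerator, denominator) input format and the sample coordinates are my own illustration, not iPhoto's code, which isn't public.

```python
from fractions import Fraction


def dms_to_decimal(dms, ref):
    """Convert an EXIF-style (degrees, minutes, seconds) triple to decimal degrees.

    dms: three rationals as (numerator, denominator) pairs, mirroring how the
         EXIF GPSLatitude / GPSLongitude tags store their values.
    ref: the matching GPSLatitudeRef / GPSLongitudeRef letter ('N', 'S', 'E', 'W').
    """
    degrees, minutes, seconds = (Fraction(num, den) for num, den in dms)
    value = degrees + minutes / 60 + seconds / 3600
    # South latitudes and west longitudes are negative in decimal notation.
    if ref.upper() in ("S", "W"):
        value = -value
    return float(value)


# Hypothetical sample values, as a camera might write them.
lat = dms_to_decimal(((37, 1), (49, 1), (1920, 100)), "N")
lon = dms_to_decimal(((122, 1), (28, 1), (4680, 100)), "W")
print(f"{lat:.5f}, {lon:.5f}")  # prints "37.82200, -122.47967"
```

Using `Fraction` keeps the rational values exact until the final conversion to a float, which mirrors how EXIF itself stores them.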

More on iWeb next time.